A loop runner you can deploy.

Generate → compile → benchmark → verify, packaged as a single engine that runs continuously over every priority workload, not as a one-off research script.

Turning silicon into production AI performance through continuous learning.

Every chip generation resets the optimization clock. Each one needs hand-tuned kernels by a small bench of specialists, long before software catches up to silicon.

New architectures require repeated optimization before software reaches peak performance.

Manual CUDA/PTX work depends on scarce specialists and slow iteration.

Standard libraries miss custom ops, fused workloads, and unusual shapes.

Generated kernels remain hard to deploy, monitor, and maintain in real systems.

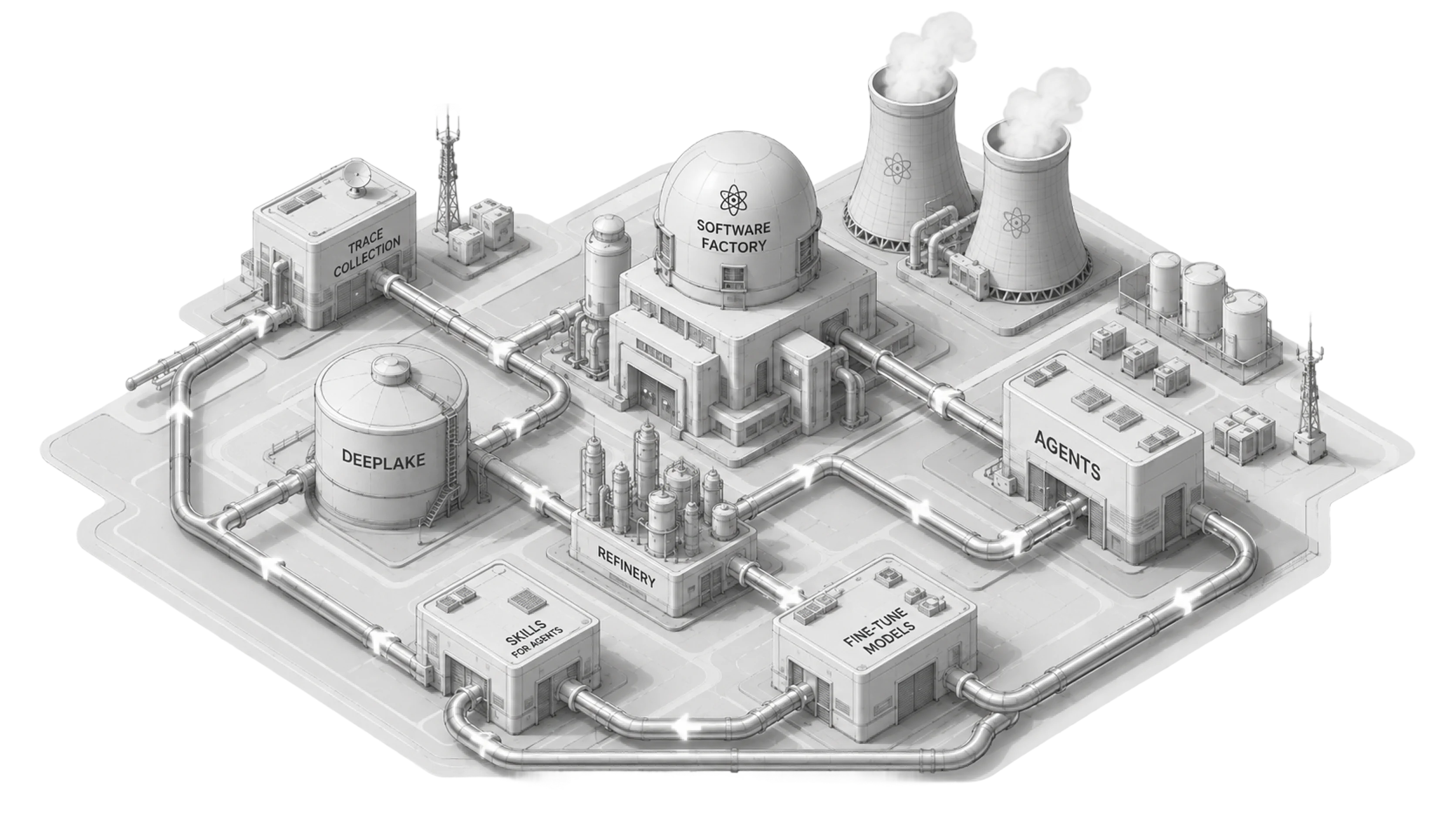

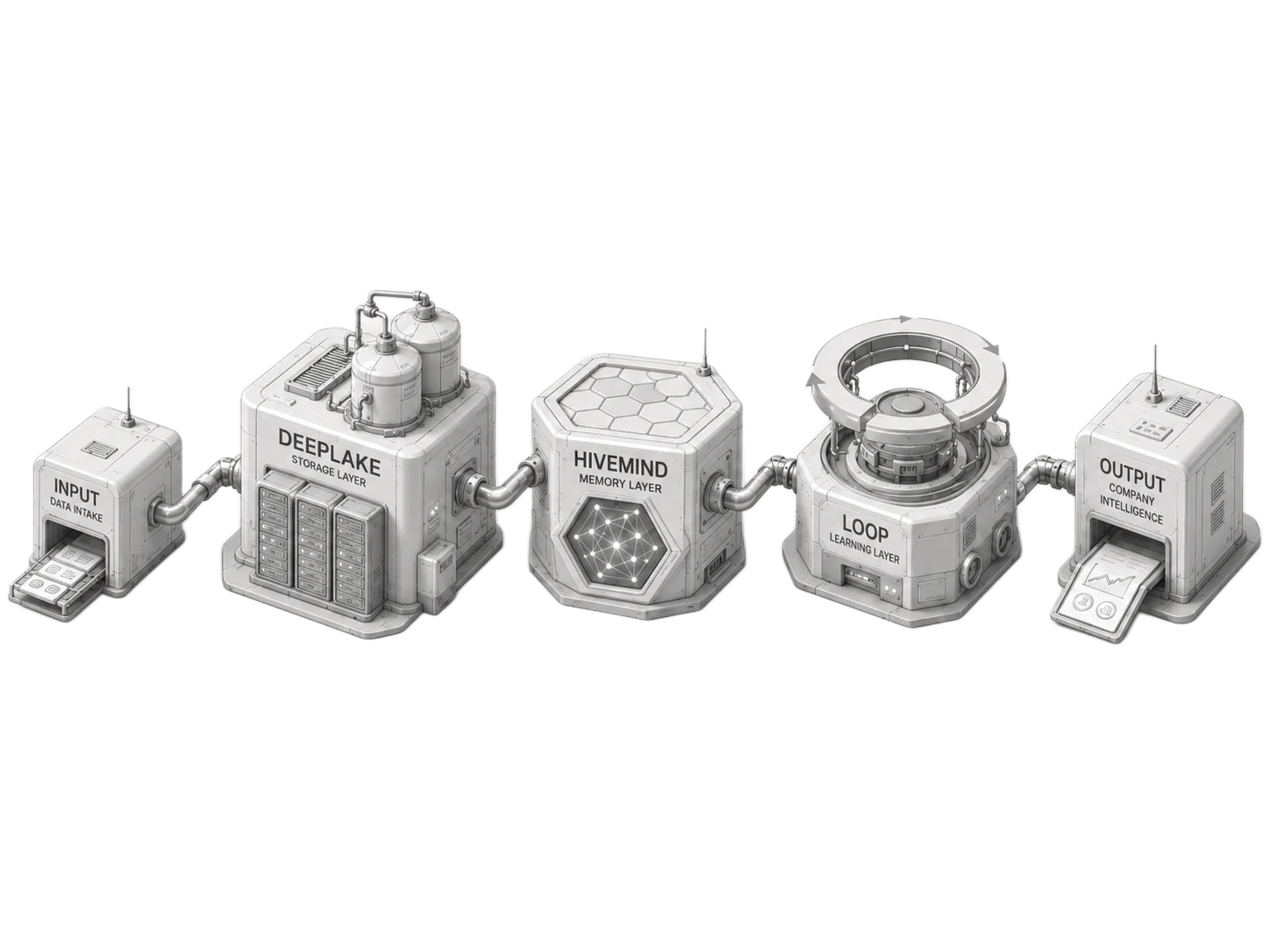

Loop runs a closed cycle over each priority workload: every generated kernel becomes feedback for the next, and every win raises the floor for everything that follows.

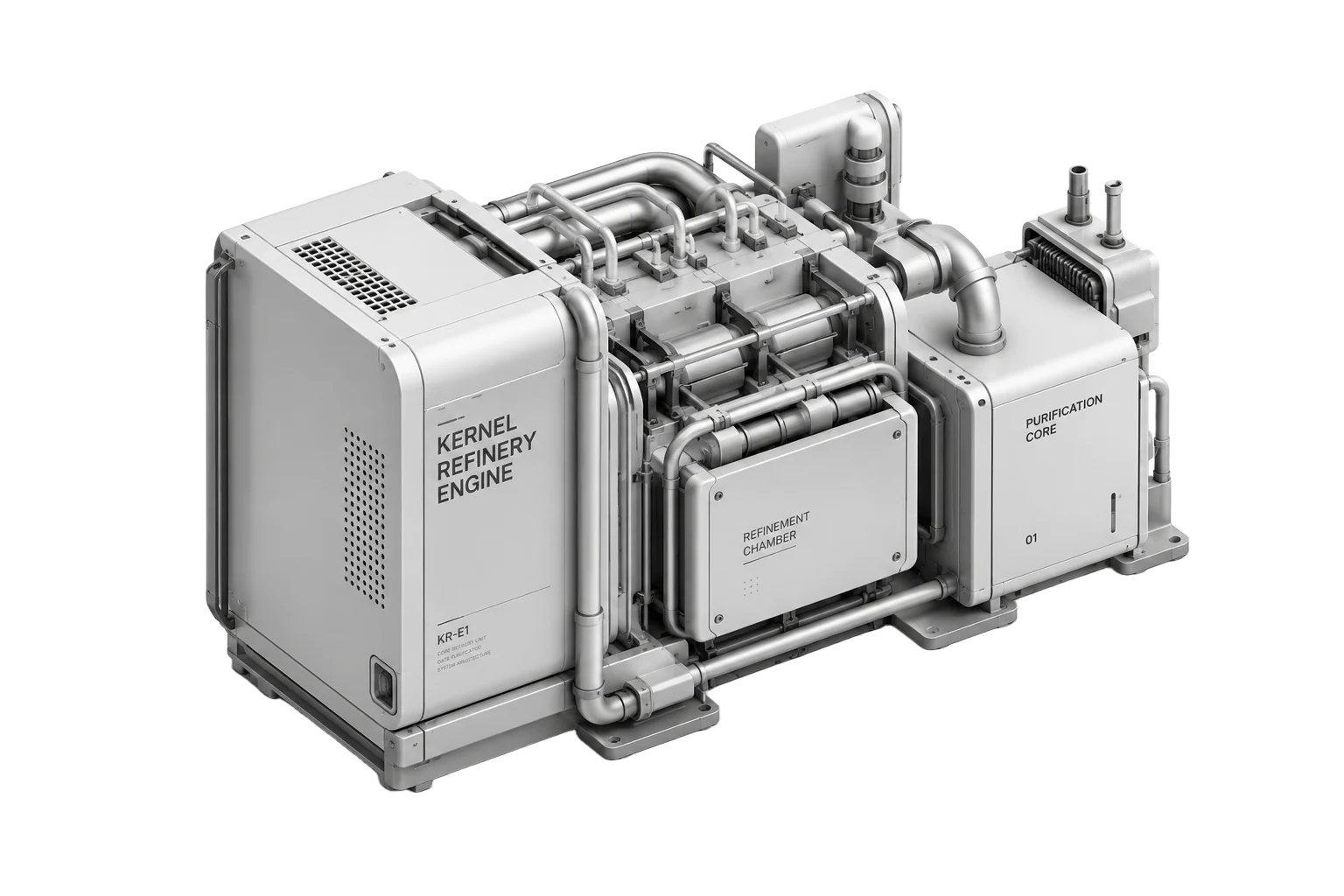

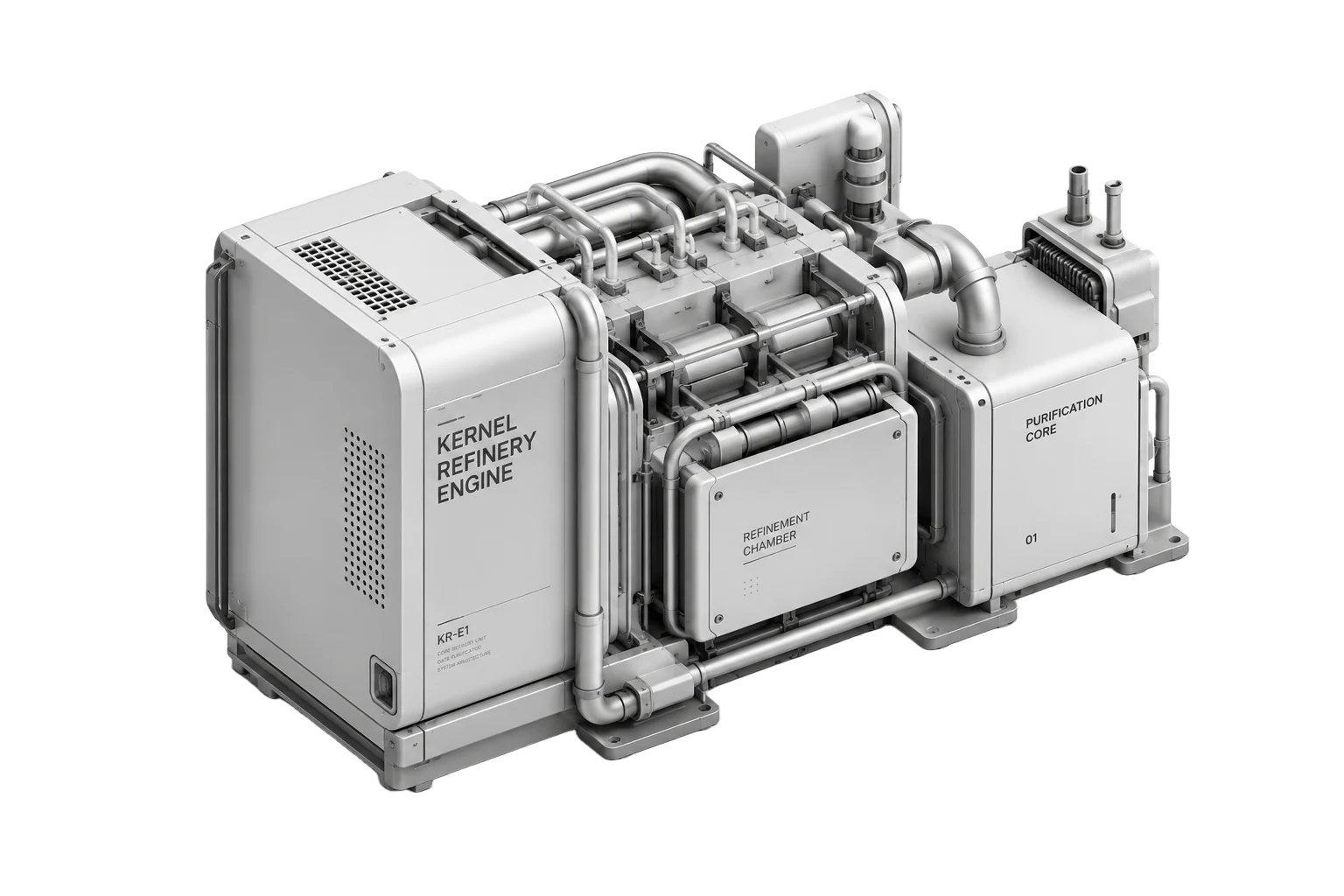

An engine that runs the cycle, telemetry that keeps it grounded in real workloads, and a spec sheet that turns 'faster' into a number you can audit.

Generate → compile → benchmark → verify, packaged as a single engine that runs continuously over every priority workload, not as a one-off research script.

Always-on signal collection from production. Every request, every regression, every win folds back into the loop, so the system learns from how the workload is actually used, not just how it was specced.

Speedup targets, latency budgets, accuracy floors, written into the sprint contract before any code runs and audited at the end. No demos that don't survive production.

Every claim in Loop has a measurement behind it. Step through the receipts: token throughput, kernel runs, autoresearch progress, and the partnership track record.

527 experiments · 17 running-best improvements

Loop is not a research demo. It builds on years of shipped engineering: open-source adoption, F500 production workloads, peer-reviewed research, and co-developed education.

"Physical AI, powered by vision-language-action models (VLAs), enables robots not only to see, but to perceive and reason. Activeloop and Pinkbot achieved 9x faster throughput with Intel Core Ultra Series 3."Source: Intel on X

"In science, sometimes you have to rethink the basics to make progress. Flagship's work with Activeloop has been all about that, getting back to the core of how we store and retrieve data for AI to speed up how we solve really tough scientific problems."

Mark Kim, Flagship Pioneering

Source: Flagship Pioneering case studyBook a demo to review Loop against 3-5 priority workloads. If we hit the agreed speedup targets, convert into a platform partnership.