Data often stands between a state-of-the-art computer vision machine learning project and just another experiment. Unfortunately, there is no widely adopted industry standard for selecting the best and most relevant benchmarks.

Imagine you are working on a new computer vision algorithm. How would you select the right benchmark for it? Would you collect the data yourself? Pick the benchmark with the most citations? How would you deal with licensing or permission issues? Where would you host these large datasets?

Because selecting a good benchmark involves so many complications, you often do not achieve the results your model should be capable of. This problem will only grow as computer vision data increases in volume and complexity. Computer vision datasets are no longer simply cats and dogs: they are becoming more and more sophisticated and now include images from complex tasks such as cars driving through cities.

Reflecting on how you find the right computer vision dataset for your next machine learning task can substantially improve the results of your model. The right dataset benchmark lets you evaluate and compare machine learning methods to find the best one for your project.

In machine learning, benchmarking is the practice of comparing tools and platforms to identify the best-performing technologies in the industry. A benchmark measures performance with a specific indicator, producing a metric that can then be compared across machine learning methods.
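As a rough illustration of that idea, the sketch below scores several methods on the same fixed benchmark with one metric (accuracy) and ranks them. Everything in it is hypothetical: the toy benchmark, the three "methods", and the helper names `accuracy` and `rank_methods` are made up for illustration and stand in for real images and models.

```python
from typing import Callable, Dict, List, Tuple

# Toy stand-in for (image, class label): a single number and a 0/1 label.
Example = Tuple[float, int]
Model = Callable[[float], int]


def accuracy(model: Model, benchmark: List[Example]) -> float:
    """Fraction of benchmark examples the model labels correctly."""
    correct = sum(1 for x, label in benchmark if model(x) == label)
    return correct / len(benchmark)


def rank_methods(methods: Dict[str, Model],
                 benchmark: List[Example]) -> List[Tuple[str, float]]:
    """Score every method on the same benchmark, best first."""
    scores = {name: accuracy(model, benchmark) for name, model in methods.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical benchmark and methods, for illustration only.
toy_benchmark = [(0.1, 0), (0.4, 0), (0.6, 1), (0.9, 1)]
methods = {
    "threshold_0.5": lambda x: int(x > 0.5),
    "threshold_0.3": lambda x: int(x > 0.3),
    "always_one": lambda x: 1,
}

leaderboard = rank_methods(methods, toy_benchmark)
for name, score in leaderboard:
    print(f"{name}: {score:.2f}")
```

The point of the design is that every method sees exactly the same examples and the same metric, which is what makes the comparison direct and fair.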

What does having the right dataset benchmark mean?

Now you may be asking yourself: is there such a thing as a good or bad dataset benchmark, and how can you tell one from the other? Both are important and underrated questions in the machine learning community.

In this blog post, we will answer these questions so that, after all the research and work you put into crafting your machine learning model, it reaches its full potential!

Good Benchmarks

Recently, many publicly available real-world and simulated benchmark datasets have emerged from an array of different sources. However, these sources have been organized and adopted as standards inconsistently, and consequently many existing benchmarks lack the diversity needed to benchmark computer vision algorithms effectively.

Good benchmark datasets allow you to evaluate several machine learning methods in a direct and fair comparison. However, a common problem with popular benchmarks is that they are not an accurate depiction of the real world.

Consequently, methods that rank high on popular computer vision benchmarks often perform worse when tested outside the data or laboratory in which they were created.

Good computer vision benchmark datasets will reflect the setting of the real-world application of the model you are developing. ObjectNet is an example of an image repository purposefully created to avoid the biases found in popular image datasets. The intention behind ObjectNet’s creation was to reflect the realities AI algorithms face in the real world.

Unsurprisingly, when several of the best object detectors were tested on ObjectNet, they showed a significant drop in performance, indicating a need for better dataset benchmarks to evaluate computer vision systems.
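One simple way to quantify such a drop is the relative decrease in a score between a reference benchmark and a more realistic one. The sketch below assumes accuracy as the metric; the helper name `relative_drop` and the two scores are hypothetical and are not results reported for ObjectNet or any real model.

```python
def relative_drop(reference_score: float, new_score: float) -> float:
    """Relative decrease from reference_score to new_score (0.25 means a 25% drop)."""
    if reference_score <= 0:
        raise ValueError("reference_score must be positive")
    return (reference_score - new_score) / reference_score


# Made-up accuracies for illustration only.
accuracy_on_popular_benchmark = 0.80
accuracy_on_realistic_benchmark = 0.60

drop = relative_drop(accuracy_on_popular_benchmark, accuracy_on_realistic_benchmark)
print(f"Relative drop: {drop:.0%}")
```

A large value here suggests the first benchmark was flattering the model rather than predicting how it behaves in realistic conditions.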

Bad Benchmarks

If good computer vision benchmark datasets provide a fair representation of the real world, can you guess what characterizes a bad computer vision benchmark dataset?

Benchmarks that mainly contain images taken in ideal conditions are biased toward the perfect, unrealistic conditions in which they were made. Consequently, they are poor at measuring how well a model handles the messiness found in the real world.

For example, dataset benchmarks built on ImageNet are biased toward pictures of objects as you would find them online in a blog rather than in the real world.
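A quick way to spot this kind of bias is to break accuracy down by capture condition instead of reporting one overall number. The sketch below assumes each evaluated image carries a condition tag; the tags, the toy results, and the helper name `accuracy_by_condition` are hypothetical.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def accuracy_by_condition(results: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Per-condition accuracy from (condition_tag, prediction_was_correct) pairs."""
    totals: Dict[str, int] = defaultdict(int)
    hits: Dict[str, int] = defaultdict(int)
    for condition, is_correct in results:
        totals[condition] += 1
        hits[condition] += int(is_correct)
    return {condition: hits[condition] / totals[condition] for condition in totals}


# Made-up evaluation results: mostly "ideal" images, few "cluttered" ones.
toy_results = [
    ("ideal", True), ("ideal", True), ("ideal", True), ("ideal", False),
    ("cluttered", True), ("cluttered", False),
]

per_condition = accuracy_by_condition(toy_results)
for condition, score in per_condition.items():
    print(f"{condition}: {score:.2f}")
```

If the benchmark is dominated by ideal-condition images, the overall score mostly reflects that group, and weaker performance on messier images stays hidden unless it is reported separately.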

So although ImageNet is a popular dataset for computer vision, the images in its database do not adequately represent reality, and therefore, ImageNet is not the best computer vision benchmark dataset.

What types of dataset benchmarks exist?

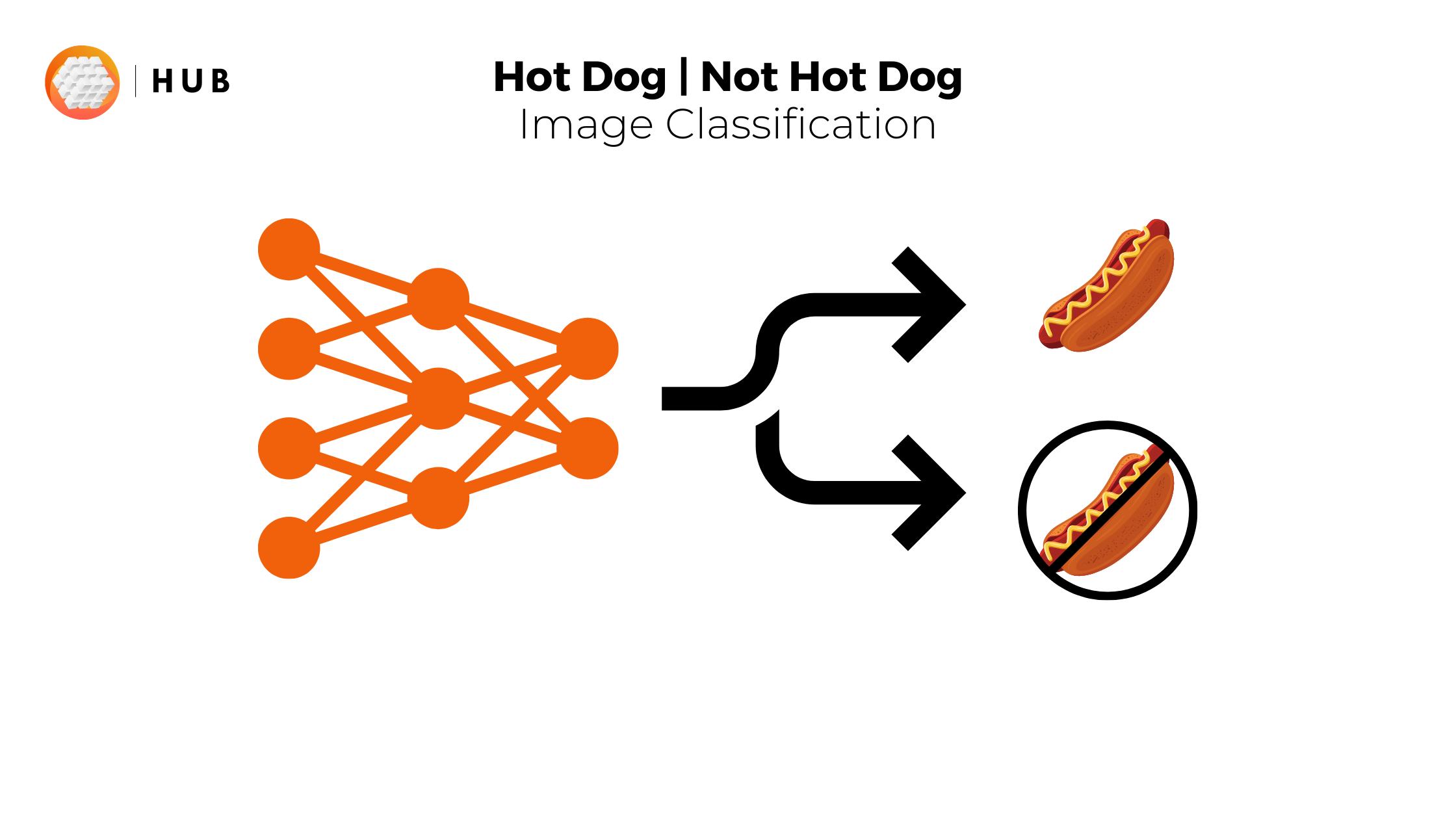

There are many types of dataset benchmarks for different tasks. For example, segmentation, scene understanding, and image classification all require different types of benchmarks.

Although you now know how to distinguish a good benchmark from a bad one on your own, we want to equip you with a list of some of the best benchmarks for segmentation, classification, and scene understanding.

Hopefully, these lists will let you put your computer vision models to work right away and give you a reference point for judging whether other benchmarks are good or bad.

Best dataset benchmarks for segmentation

- The Berkeley Segmentation Dataset and Benchmark (link).

- KITTI semantic segmentation benchmark (link). Check out the Hub equivalent for the test, train, and validation KITTI datasets.

Best dataset benchmarks for classification

- ObjectNet Benchmark Image Classification (link)

Best dataset benchmarks for scene understanding

- Scene Understanding on ADE20K val (link)

- Scene Understanding on Semantic Scene Understanding Challenge Passive Actuation & Ground-truth Localisation (link)

Why are Dataset Benchmarks So Important?

Since there are so many types of dataset benchmarks, it is understandably challenging to create enough high-standard benchmarks for each dataset.

However, it is crucial to do so, because these benchmarks let you see how well your machine learning methods learn patterns in a dataset that has been accepted as the standard.

But how can you make sure that the benchmark you are using is the right measurement tool when it comes to the performance of machine learning techniques?

It’s no secret that building a computer vision dataset that accurately depicts reality is challenging, as datasets lack variety and often depict images or videos in ideal conditions.

Perhaps to make better computer vision machine learning models we need more collaboration between organizations when making dataset benchmarks. Otherwise, the most popular datasets will have many benchmarks made for them, while lesser-known ones will have few or none.

The trend showcased in the histogram above demonstrates how popular datasets have more benchmarks made for them. Being limited to the most popular datasets due to a lack of benchmarks makes it more difficult to obtain variety and an accurate depiction of reality for datasets used on models.

However, Activeloop, the dataset optimization company, offers a way to build a central and diverse set of benchmark datasets.

Tools like Activeloop allow for dataset collaboration via centralized storage of the dataset and version control, allowing engineers to create the best computer vision dataset benchmark to develop their next state-of-the-art model!

Moreover, delivering valuable insights from unstructured data is difficult, as there is no industry standard for storing unstructured datasets. Activeloop’s simplicity makes it accessible to many people, and it is therefore on its way to becoming the industry standard for storing unstructured datasets. Its popularity makes it the obvious tool for creating dataset benchmarks collaboratively.

Also, rather than spending hours or even days preprocessing datasets, you can perform the preprocessing steps once, centrally, and then upload the result to Activeloop for others to use when making benchmarks.

Clearly, business insights generated from unstructured data are becoming more and more valuable. Yet computer vision benchmarks are not being created at the same rate as computer vision data is being generated.

To continue developing state-of-the-art computer vision machine learning projects, efficient collaboration must occur between machine learning developers to make better computer vision benchmarks. This efficient collaboration can be facilitated with Activeloop.